I’m rebuilding my SaaS from Bubble.io to Next.js, and I needed a way to verify that the new version matches the original down to every button, label, and dropdown. So I built an automated system that crawls both apps, extracts every visible element, compares them, and fixes differences one at a time. 485 commits, 61 audit cycles, 14 user flows. Here’s how it works, what it’s made of, and what it found.

The rebuild isn’t the hard part

The decision to rebuild gets all the attention. Should you rewrite? Migrate gradually? Those debates are fine, but they skip the harder question: once you’ve decided, how do you actually verify the new version matches the old one?

I run Premierely, a booking platform for SoundCloud premieres and reposts. 282 users depend on it daily. The Bubble version has 14 distinct user flows across two roles – channel owners and artists. Dashboard tables with filters and context menus. A track submission pipeline. Scheduling with time slots. A built-in chat system. Five different settings pages. Download gates with dark-themed public pages. Each flow has dozens of buttons, labels, dropdowns, badges, and states that users interact with every day.

Before writing any rebuild code, I ran the entire Bubble app through Buildprint to extract its full structure. Data types, workflows, page layouts, option sets, styles – all of it, turned into documentation the agent could build from. No clicking through Bubble screen by screen to reverse-engineer what exists. The agent had a complete map before writing line one.

The real risk in a platform migration isn’t whether the code is good. It’s the hundreds of small UI details your users depend on that you’ve forgotten exist. A context menu showing “Send new request” instead of “Edit” and “Reject” means a channel owner can’t reverse a decision. Not a cosmetic issue. A broken workflow.

I use Premierely every day to run premieres at my label Marked. I’d eventually notice every missing label and wrong color myself. But “eventually” means months of manual discovery while my users hit the same problems. I needed a system that finds every difference before a single user does.

What the parity loop does

The parity loop is a 10-phase cycle that runs autonomously inside my development environment. It picks a user flow from a queue, then audits both the source app and the target app using the same protocol. Browser automation navigates to each screen, takes a screenshot, and extracts every visible element it can find. Form fields, opened dropdowns, toggles, table columns, row actions, badges, icons, filters, search inputs, empty states, validation errors, loading states, modals, and toasts. Then it interacts with every clickable element to uncover hidden states – menus that only appear on hover, panels that expand on click, dialogs that open from buttons.

Both apps get the same treatment. The result is two extraction documents that catalog every element on the screen. The system compares them side by side and produces a gap document. Each gap gets a priority from P1 (breaks a core workflow) down to P4 (minor cosmetic difference).
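The comparison step can be pictured as a keyed diff between two element catalogs. The sketch below is illustrative only, not the production code: the `ExtractedElement` shape, the `diffExtractions` function, and the screen name are assumptions, and the real extraction documents are markdown rather than typed records.

```typescript
// Hypothetical shape for one cataloged element. The real extraction
// documents are markdown; this flat record is an assumption for the sketch.
interface ExtractedElement {
  screen: string; // where the element was found
  kind: string;   // e.g. "menu-item", "badge", "label"
  text: string;   // visible text
}

interface Gap {
  direction: "missing-in-target" | "extra-in-target";
  element: ExtractedElement;
}

// Two elements count as the same if screen, kind, and text all match.
const sameElement = (a: ExtractedElement, b: ExtractedElement): boolean =>
  a.screen === b.screen && a.kind === b.kind && a.text === b.text;

function diffExtractions(source: ExtractedElement[], target: ExtractedElement[]): Gap[] {
  const missing = source
    .filter((s) => !target.some((t) => sameElement(s, t)))
    .map((element): Gap => ({ direction: "missing-in-target", element }));
  const extra = target
    .filter((t) => !source.some((s) => sameElement(s, t)))
    .map((element): Gap => ({ direction: "extra-in-target", element }));
  return missing.concat(extra);
}

// Sample data modeled on the context-menu gap described in this post.
const bubbleMenu: ExtractedElement[] = [
  { screen: "accepted-track-menu", kind: "menu-item", text: "Edit" },
  { screen: "accepted-track-menu", kind: "menu-item", text: "Reject" },
];
const nextMenu: ExtractedElement[] = [
  { screen: "accepted-track-menu", kind: "menu-item", text: "Send new request" },
];

const menuGaps = diffExtractions(bubbleMenu, nextMenu);
console.log(menuGaps.map((g) => `${g.direction}: ${g.element.text}`));
```

With this sample, the diff reports two elements missing from the rebuild and one extra element that the source app never had. Prioritizing those gaps is a separate step.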

Then it fixes one gap at a time. Each fix is a single commit. After fixing, it deploys the change to the live rebuild and re-audits to confirm the fix worked. If it didn’t, it tries again.

The pass system is what makes this actually work. After all 14 flows are audited and gaps are fixed, the system doesn’t stop. It resets every flow and re-audits everything from scratch. Fixing a gap in one flow can break something in another – a CSS change to the chat bubble might affect the same component used in the track review dialog. The loop only exits when a complete pass across all 14 flows returns zero gaps.
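The exit condition is easy to get subtly wrong, so here is a minimal sketch of it. The `runUntilCleanPass` function, the `AuditAndFix` callback, and the simulated numbers are hypothetical. The point is only that a pass counts as clean when every flow, re-audited from scratch, surfaces zero gaps.

```typescript
// Hypothetical audit function: audits one flow, fixes what it finds,
// and returns how many gaps that audit surfaced.
type AuditAndFix = (flow: string, pass: number) => number;

// Returns the number of the first pass in which every flow audited clean,
// or null if no clean pass happened within maxPasses.
function runUntilCleanPass(
  flows: string[],
  auditAndFix: AuditAndFix,
  maxPasses: number,
): number | null {
  for (let pass = 1; pass <= maxPasses; pass++) {
    let gapsThisPass = 0;
    for (const flow of flows) {
      // Every flow is re-audited, even if it was clean in the previous pass.
      gapsThisPass += auditAndFix(flow, pass);
    }
    if (gapsThisPass === 0) return pass;
  }
  return null;
}

// Simulated audits: chat has 3 gaps in pass 1, and fixing them causes
// one regression in review that only a second pass can detect.
const simulatedAudit: AuditAndFix = (flow, pass) => {
  if (flow === "chat" && pass === 1) return 3;
  if (flow === "review" && pass === 2) return 1;
  return 0;
};

const cleanPass = runUntilCleanPass(["chat", "review"], simulatedAudit, 5);
console.log(cleanPass);
```

In this simulation the loop exits on pass 3. Pass 1 finds the chat gaps, pass 2 catches the regression in review, and only pass 3 comes back clean for both flows.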

Settings-integrations hit zero gaps on its first audit. Full parity out of the box. Review-accept-reject surfaced 14 gaps in a single cycle, including 3 P1 items that blocked core workflows.

What it’s built from

The parity loop isn’t one tool. It’s four components wired together, the last of which is the state machine that drives the loop.

The first piece is a browser automation layer. I use Playwright and Stagehand to navigate both apps, log in as different user roles, click through every menu and dialog, and take screenshots. The browser sessions are short-lived – they time out every few minutes, so the system has to be resilient to dropped sessions and pick up where it left off.
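One way to get that resilience is to checkpoint each captured screen and retry only the one that was interrupted. This is a sketch under assumptions: `crawlWithResume`, `CaptureResult`, and the `capture` callback are invented names, and the callback is synchronous only to keep the example short (real Playwright calls are async).

```typescript
type CaptureResult = "ok" | "session-expired";

// `capture` stands in for the real browser work (navigate, screenshot, extract).
function crawlWithResume(
  screens: string[],
  capture: (screen: string) => CaptureResult,
  alreadyDone: string[],
  maxRestarts: number,
): string[] {
  const done = alreadyDone.slice(); // copy so the caller's checkpoint is not mutated
  let restarts = 0;
  for (const screen of screens) {
    if (done.indexOf(screen) !== -1) continue; // captured before an earlier drop
    while (capture(screen) === "session-expired") {
      restarts += 1;
      if (restarts > maxRestarts) {
        throw new Error(`gave up after ${maxRestarts} session restarts at "${screen}"`);
      }
      // A real system would log in again with a fresh browser session here.
    }
    done.push(screen); // checkpoint: this screen never needs re-capturing
  }
  return done;
}

// Fake capture whose session expires on the very first attempt.
const attempts: string[] = [];
const flakyCapture = (screen: string): CaptureResult => {
  attempts.push(screen);
  return attempts.length === 1 ? "session-expired" : "ok";
};

const captured = crawlWithResume(
  ["dashboard", "chat", "settings"],
  flakyCapture,
  ["dashboard"], // checkpoint left behind by an earlier run
  3,
);
console.log(captured, attempts);
```

Here `dashboard` is skipped because the checkpoint already lists it, `chat` is attempted twice, and `settings` once.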

The second piece is an extraction engine. After the browser captures a screen, an AI agent reads the screenshot and catalogs every visible element into a structured markdown document. Not just what’s on screen – it opens every dropdown, expands every collapsible section, hovers over every interactive element. The goal is to surface things that would only appear through interaction, not just observation.

Underneath both of those sits the same Buildprint data that powered the initial build. 14 JSON contracts, one per flow, listing every element, dropdown option, visibility rule, and workflow that should be on each screen. The browser discovers what’s visible. The contracts know what should be there. When a contract says a dropdown should have three options and the extraction only finds two, the system flags it before anyone clicks anything.
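The dropdown case translates into a small check. The contract format is not shown in this post, so the `DropdownSpec` shape, the `checkDropdowns` function, and the `status-filter` example with its option labels are all invented for illustration.

```typescript
// Hypothetical contract shape; the real Buildprint-derived JSON may differ.
interface DropdownSpec {
  id: string;
  options: string[];
}

interface ContractViolation {
  dropdownId: string;
  missingOptions: string[];
}

function checkDropdowns(
  contract: DropdownSpec[],
  extracted: DropdownSpec[],
): ContractViolation[] {
  const violations: ContractViolation[] = [];
  for (const expected of contract) {
    const matches = extracted.filter((d) => d.id === expected.id);
    // A dropdown absent from the extraction is missing all of its options.
    const foundOptions = matches.length > 0 ? matches[0].options : [];
    const missingOptions = expected.options.filter(
      (option) => foundOptions.indexOf(option) === -1,
    );
    if (missingOptions.length > 0) {
      violations.push({ dropdownId: expected.id, missingOptions });
    }
  }
  return violations;
}

// Invented example: the contract expects three options, the crawl found two.
const flowContract: DropdownSpec[] = [
  { id: "status-filter", options: ["Pending", "Accepted", "Rejected"] },
];
const crawlResult: DropdownSpec[] = [
  { id: "status-filter", options: ["Pending", "Accepted"] },
];

console.log(checkDropdowns(flowContract, crawlResult));
```

The check reports `status-filter` with `Rejected` missing. A dropdown that the crawl never found at all would be reported with every expected option missing.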

Third is the comparison logic. Two extraction documents go in alongside the contract data, and a prioritized gap list comes out. Each gap gets a P1 through P4 priority based on whether it breaks a workflow, affects trust, or is purely cosmetic. The gap documents are just markdown files – easy to review, easy to diff between cycles.
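A rough sketch of how the priority assignment could work. Only the P1 and P4 definitions come from this post. The P2/P3 split, the `GapSignals` fields, and the signal values in the sample are assumptions, and in practice the signals would come from the AI agent or a reviewer.

```typescript
// Hypothetical per-gap signals.
interface GapSignals {
  description: string;
  breaksWorkflow: boolean; // user cannot complete a core task
  affectsTrust: boolean;   // looks or reads like a different product
  changesWording: boolean; // visible text differs
}

type Priority = 1 | 2 | 3 | 4; // rendered as P1..P4

// P1 and P4 follow the definitions in this post; the P2/P3 split is an assumption.
function prioritize(gap: GapSignals): Priority {
  if (gap.breaksWorkflow) return 1;
  if (gap.affectsTrust) return 2;
  if (gap.changesWording) return 3;
  return 4;
}

// Produces markdown list lines, most severe first.
function toGapList(gaps: GapSignals[]): string[] {
  return gaps
    .map((gap) => ({ gap, priority: prioritize(gap) }))
    .sort((a, b) => a.priority - b.priority)
    .map(({ gap, priority }) => `- P${priority}: ${gap.description}`);
}

const sampleGaps: GapSignals[] = [
  { description: "Open chat count badge is red instead of blue",
    breaksWorkflow: false, affectsTrust: false, changesWording: false },
  { description: "Accepted track menu lacks Edit and Reject",
    breaksWorkflow: true, affectsTrust: true, changesWording: true },
  { description: "Details panel label wording differs",
    breaksWorkflow: false, affectsTrust: false, changesWording: true },
  { description: "Chat bubble background is dark instead of light blue",
    breaksWorkflow: false, affectsTrust: true, changesWording: false },
];

console.log(toGapList(sampleGaps).join("\n"));
```

The output is a plain markdown list ordered P1 to P4, the kind of file that diffs cleanly between cycles.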

The fourth piece is the state machine itself. A JSON file tracks which flow is active, which phase the loop is in, how many gaps were found, how many are fixed, and what pass we’re on. The autonomous development loop reads this file at the start of each iteration, executes one phase, updates the state, and exits. Next iteration picks up from the new state. That’s what makes it resumable – the loop can crash, restart, or run across multiple sessions without losing progress.
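To make the "read state, run one phase, write state, exit" pattern concrete, here is a simplified sketch. The five phases below are a stand-in for the real 10-phase cycle, and `runIteration`, the `countGaps` callback, and the field names are assumptions. A real version would read and write the JSON file on disk instead of passing strings around.

```typescript
// Simplified phase list for illustration; the real loop has 10 phases.
type Phase = "audit" | "compare" | "fix" | "verify" | "done";

interface LoopState {
  activeFlow: string;
  phase: Phase;
  gapsFound: number;
  gapsFixed: number;
  pass: number;
}

// One iteration: parse the saved state, execute exactly one phase,
// and return the new state as JSON for the next iteration to pick up.
function runIteration(savedState: string, countGaps: () => number): string {
  const state: LoopState = JSON.parse(savedState);
  let next: LoopState = state;
  switch (state.phase) {
    case "audit":
      next = { ...state, phase: "compare" };
      break;
    case "compare": {
      const gapsFound = countGaps();
      next = { ...state, gapsFound, phase: gapsFound > 0 ? "fix" : "done" };
      break;
    }
    case "fix": // one gap per iteration, mirroring one fix per commit
      next = { ...state, gapsFixed: state.gapsFixed + 1, phase: "verify" };
      break;
    case "verify":
      next = { ...state, phase: state.gapsFixed < state.gapsFound ? "fix" : "done" };
      break;
    case "done":
      break;
  }
  return JSON.stringify(next);
}

// Each loop turn could be a separate process; only the JSON string survives.
let saved = JSON.stringify({
  activeFlow: "chat", phase: "audit", gapsFound: 0, gapsFixed: 0, pass: 2,
});
let iterations = 0;
while ((JSON.parse(saved) as LoopState).phase !== "done") {
  saved = runIteration(saved, () => 2); // pretend the comparison finds 2 gaps
  iterations += 1;
}
console.log(iterations, saved);
```

Because every iteration starts from the serialized state alone, a crash between iterations loses at most the phase that was in flight.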

I’m not going to walk through the exact implementation because the details are specific to my setup and my autonomous coding environment. But the architecture – browser crawl, AI extraction, structured comparison, state-tracked fix loop – is portable. If you’re doing a migration complex enough to need this, the components are available. Wiring them together is the creative part.

The gaps you never see coming

The categories matter more than the individual gaps, so that’s how I’ve grouped them.

Broken workflows. The accepted track context menu showed “Send new request” instead of “Edit” and “Reject.” A channel owner who accepted a track had no way to reverse it. The track review dialog was missing the “Message from artist” section entirely – the owner reviewing a submission couldn’t see what the artist wrote. The chat sidebar listed conversations by track title instead of channel name, which meant both roles were looking at the wrong identifier.

Trust erosion. The chat message bubble used a dark primary background instead of the source app’s light blue. System messages used the wrong colors – submit notifications were amber instead of gray, publish notifications were green instead of orange. Colors carry meaning in a messaging interface. Users who see their messages in a different color feel like they’re using a different product. The time slot grid showed 11 slots covering 7am to 5pm instead of 16 slots covering 7am to 10pm. Channel owners scheduling evening premieres couldn’t see those slots at all.
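The slot counts are worth a quick sanity check. Assuming one slot per hour with both the first and last hour included (an assumption, but the reading that matches the quoted numbers), a small helper shows exactly which slots went missing. `hourlySlots` is a hypothetical function, not code from either app.

```typescript
// Assumes hourly slots with an inclusive end hour (24-hour input).
function hourlySlots(firstHour: number, lastHour: number): string[] {
  const slots: string[] = [];
  for (let hour = firstHour; hour <= lastHour; hour++) {
    const suffix = hour < 12 ? "am" : "pm";
    const display = hour % 12 === 0 ? 12 : hour % 12;
    slots.push(`${display}${suffix}`);
  }
  return slots;
}

const rebuildSlots = hourlySlots(7, 17); // 7am to 5pm
const bubbleSlots = hourlySlots(7, 22);  // 7am to 10pm
const hiddenSlots = bubbleSlots.filter((slot) => rebuildSlots.indexOf(slot) === -1);

console.log(rebuildSlots.length, bubbleSlots.length, hiddenSlots);
```

Under that assumption the rebuild shows 11 slots, the source shows 16, and the five hidden ones are 6pm through 10pm, which is the evening window.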

The ones nobody would report. The open chat count badge was red instead of blue. Details panel labels said “Submitted by” and “Channel owner” instead of “Contact person” and “Artist name.” The “Also send as email” checkbox only appeared for owners instead of both roles. None of these crash the app. None show up in automated tests. But they accumulate, and 117 of these small differences across 14 flows add up to a product that feels off. Users can’t explain what changed – they just know something isn’t right.

The code compiles. The pages load. Every route works. But parity isn’t about whether the app runs. It’s about whether the app feels identical to what your users already know.

What 485 commits and 61 cycles proved

485 commits tagged with the PARITY-LOOP prefix since March 1st. 61 audit cycles completed, each covering a full flow through audit, compare, fix, and verify. 14 user flows audited across two roles. 117 total gaps found in pass 2 alone – after pass 1 already fixed the worst issues.

The complexity distribution surprised me. Review-accept-reject needed 33 fixes, the most of any flow. Published gate needed 26 fixes (dark theme styling, email collection, multi-step verification). Scheduling needed 17. Settings-integrations needed zero. Some flows match the original almost perfectly. Others are off by dozens of details.

The pass system proved its value. After completing all 14 flows, the system resets everything and re-audits. That’s how you catch regressions. A fix to the chat component might affect the same component in track review. Without re-auditing, you’d never know.

Some gaps turned out to be intentional improvements in the rebuild. Better sort panels, multi-select filters, a cleaner navigation structure. The parity loop flags those too. You have to explicitly mark them as non-gaps, which forces you to be deliberate about it. Every difference between source and target is either a mistake you need to fix or a choice you need to justify. No hiding behind “we’ll get to that later.”
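One possible way to enforce that discipline is a waiver list where every entry must carry a written reason. The `Waiver` shape, the `applyWaivers` function, and the sample ids are hypothetical. The sketch only shows the rule that a difference stays open unless someone justifies it.

```typescript
interface OpenGap {
  id: string;
  description: string;
}

// A waiver marks a flagged difference as an intentional improvement.
// The shape and the mandatory reason are assumptions for this sketch.
interface Waiver {
  gapId: string;
  reason: string;
}

function applyWaivers(gaps: OpenGap[], waivers: Waiver[]): OpenGap[] {
  for (const waiver of waivers) {
    if (waiver.reason.trim() === "") {
      throw new Error(`waiver for "${waiver.gapId}" needs a written justification`);
    }
  }
  // Anything without a waiver is still a gap that needs fixing.
  return gaps.filter((gap) => !waivers.some((w) => w.gapId === gap.id));
}

const flagged: OpenGap[] = [
  { id: "dashboard-multi-select", description: "Filters allow multi-select in the rebuild" },
  { id: "chat-badge-color", description: "Open chat count badge is red instead of blue" },
];
const waivers: Waiver[] = [
  { gapId: "dashboard-multi-select", reason: "Intentional improvement over the source app" },
];

console.log(applyWaivers(flagged, waivers).map((gap) => gap.id));
```

Only `chat-badge-color` remains open here. An empty reason throws instead of silently waiving the gap, so "we'll get to that later" is not an accepted answer.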

The cost is real. Browser automation crawling two apps, an AI agent analyzing screenshots and extraction data, deployment loops after every fix. Each cycle takes 10 to 20 minutes of autonomous execution. The system runs inside my Ralph Wiggum Loop – autonomous Claude Code sessions that work without my input. Impractical as a manual process.

When this approach makes sense

Not every migration needs this. If you’re building a new product from scratch, there’s no source to compare against. If you’re intentionally redesigning the UX, parity isn’t the goal. If your app has three screens and a settings page, a manual checklist covers it.

The parity loop makes sense when three things are true. You’re migrating a production app where real users depend on specific workflows (282 people use Premierely daily – they know where every button is). Your app is complex enough that manual testing will miss details (14 flows across two roles with conditional menus and nested dialogs is too much for a spreadsheet). And you have browser automation plus an AI tool that runs autonomously. The parity loop works because it doesn’t need me clicking through screens: it audits both apps, compares them, and produces gap documents while I sleep.

The parity loop removes human judgment from “is this done?” The audit extracts what exists. The comparison finds what’s different. You never decide if the rebuild is “close enough.” You know exactly where it stands, down to the color of a chat badge.

What’s next for the rebuild

Pass 2 is in progress. Currently fixing gaps in the chat flow – 8 of 14 resolved so far. After pass 2, pass 3 starts. The goal is a complete pass with zero gaps across all 14 flows.

Every premiere at Marked still runs through the Bubble version. I process bookings, review submissions, schedule uploads, and manage payments through the original app every day. The switchover to Next.js has real stakes. I’m not migrating a demo. I’m migrating the tool I run my label with.

When a full pass returns zero gaps, the rebuild ships. Not before. If you want to see the current version, visit premierely.io. If you’re following the rebuild, I’m documenting the whole thing on LinkedIn.


Related articles

Gino Gagliardi

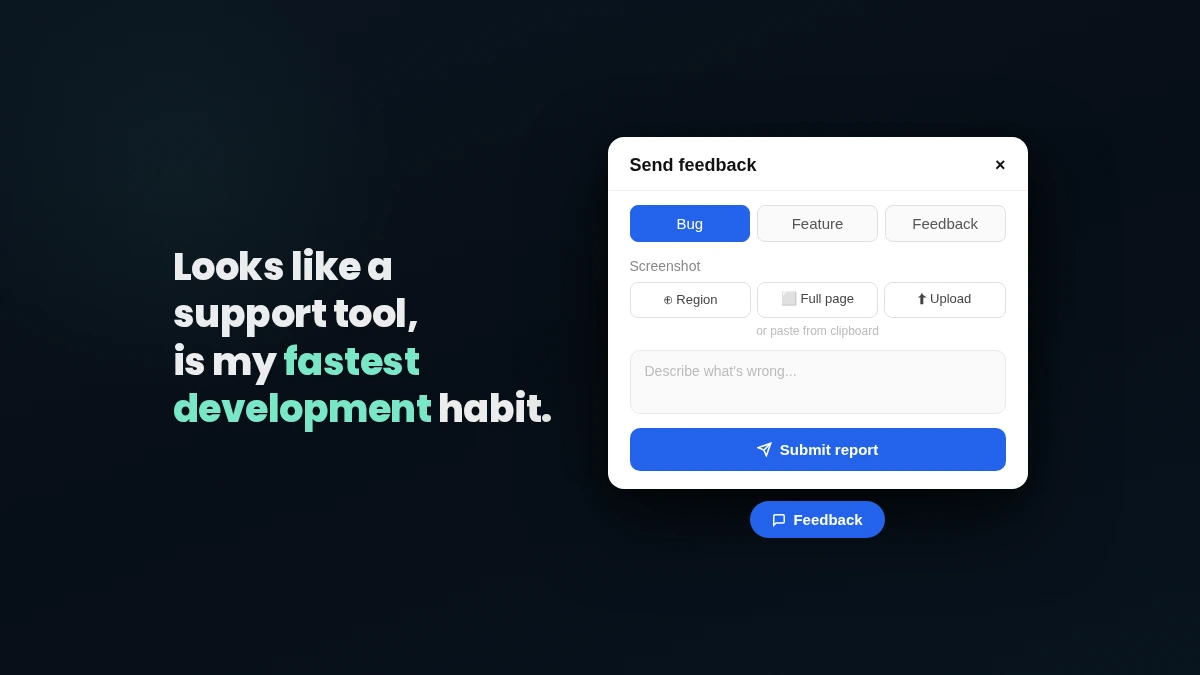

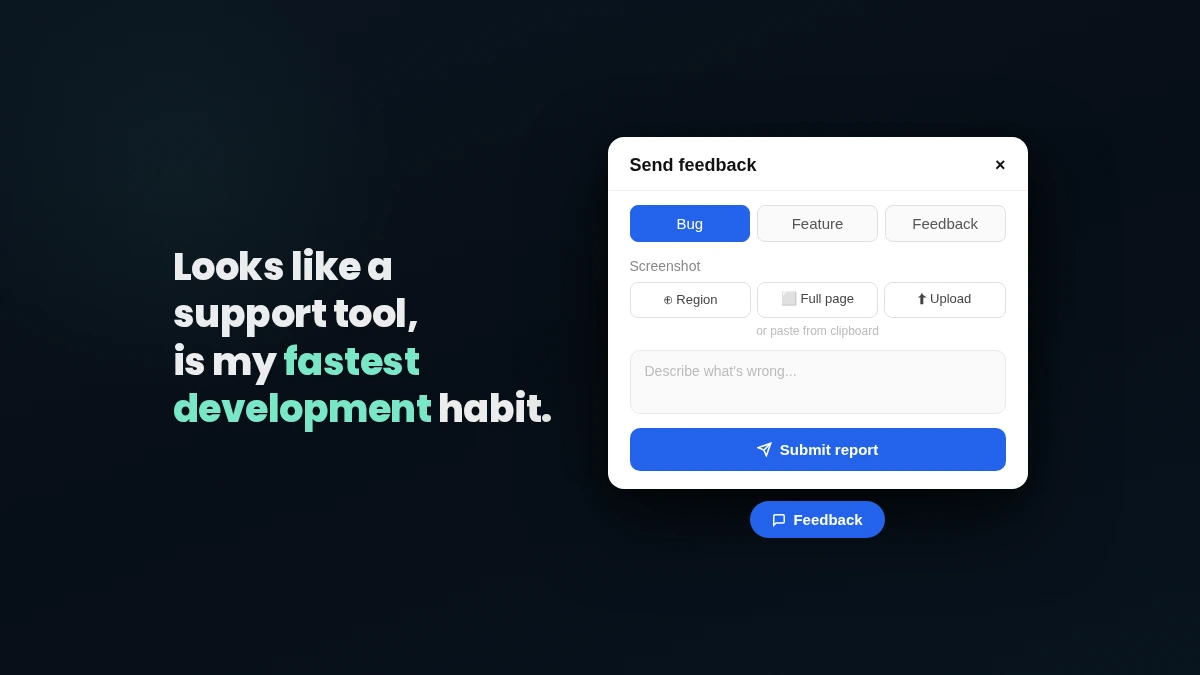

My fastest Claude Code habit is a support widget

·

·

My fastest Claude Code habit is a support widget

-

Gino Gagliardi

- March 24, 2026

Gino Gagliardi

Advanced UTM Tracking (Funnel Split Framework)

·

·

Advanced UTM Tracking (Funnel Split Framework)

-

Gino Gagliardi

- June 1, 2024

Gino Gagliardi

Hook Rate and Hold Rate (The 2025 Guide)

·

·

Hook Rate and Hold Rate (The 2025 Guide)

-

Gino Gagliardi

- May 31, 2024

Gino Gagliardi

Internal Link Juicer for WordPress (Why It's a No-Brainer)

·

·

Internal Link Juicer for WordPress (Why It's a No-Brainer)

-

Gino Gagliardi

- May 4, 2024